Gemini Gem Hallucination: The RAG Architecture That Explains It (7 Patterns That Fix It)

What Google's Documentation Does Not Tell You

Gemini Gem hallucination is real. Last month, our Gem fabricated an entire client engagement, and I'm not exaggerating.

We built a Gem to draft RFP responses using our project portfolio, certifications, and case studies. A prospect asked about our Salesforce implementation capabilities, and the Gem pulled real projects, real outcomes, exactly what we wanted.

Then a different prospect asked about legal tech.

The Gem invented three legal technology projects we never delivered, made up URLs that go nowhere, and cited a team member who doesn't exist. Eight knowledge files with our real portfolio sat right there. The Gem skipped all of them.

This is one of the most concerning AI hallucination examples I’ve encountered.

I spent almost two full days tracking down the cause. Here's what I found: Google's documentation implies that knowledge files become part of the conversation context alongside your system instructions.

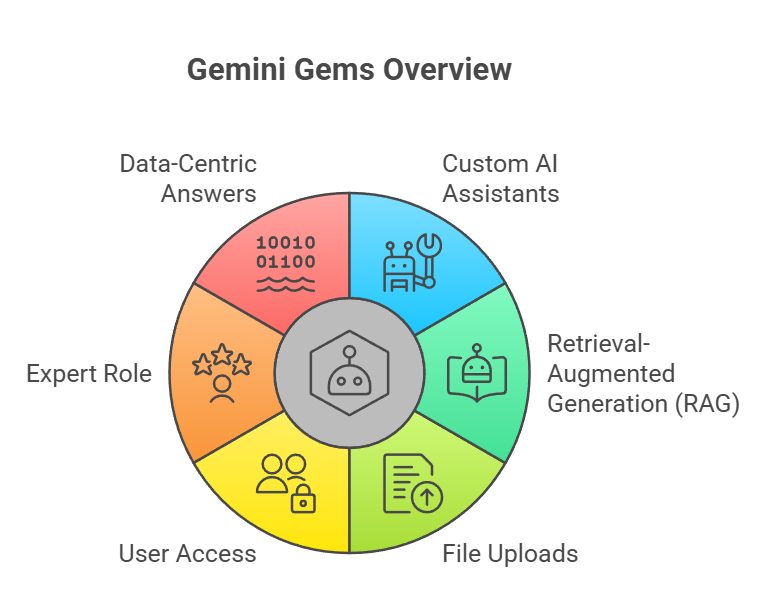

That is misleading for Gems. The files are never loaded into context in full. They are split into chunks, converted to vectors, and searched on every query. In practice, retrieval-augmented generation (RAG) powers your Gem. Google doesn't call it RAG, and I think they avoid the term, but that's exactly what it is.

And I'm not the only one who hit this. Google Support forums have been lighting up. Users report Gems ignoring knowledge files entirely. There's a January 2026 regression where Gems flat-out stopped referencing uploaded documents.

Same story everywhere. Once you understand why, though, you can work with it.

Why Does Gemini Gem Hallucination Happen?

Every turn, your system instructions are loaded into context. Knowledge files aren't.

They are indexed separately and only appear when semantic search decides your query is "close enough" to one of their chunks. That makes a Gem a RAG system in practice, and the way to avoid Gemini Gem hallucinations is to understand the split between the two layers.

Another thread documents both instructions and knowledge getting ignored.

The Google developer forum even has one titled "CRITICAL BUG: Files retrieval truncated/ignored".

The essential mental model is all about these two layers.

The instructions layer: always loaded

Whatever you type into the "Instructions" field is a proper system prompt. Every turn, every query, the model reads it. No exceptions. So if a fact absolutely cannot be wrong, this is where it lives. Project names. Cert counts. Allowed URLs. Workflow rules.

The knowledge file layer: retrieved on demand

Your uploaded files (max 10) get chunked, embedded as vectors, and stored in an index. Each time someone sends a message, semantic search runs against that index and pulls out matching chunks. Not the whole file, just the chunks that score above a relevance threshold.

Got a 20-page case study? The model might see one paragraph from it. Or none.

Retrieval thresholds and the silence problem

Here's the part that burned me. When a query doesn't match your knowledge files well enough, retrieval comes back empty. The model gets no grounding material, so it falls back on training data. Quietly.

No warning, no error, no "I couldn't find relevant information." It just writes something that sounds right. I've come to believe this silent failure is the real problem. Honestly, most hallucination issues I’ve seen come back to this exact thing.
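To make the silent failure concrete, here is a toy sketch of threshold-gated retrieval. Google has not published how Gems retrieve, so everything here is an assumption for illustration: the bag-of-words "embedding", the cosine scoring, the `0.3` threshold, and the sample chunks are all hypothetical stand-ins for whatever the real system uses.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag-of-words count vector (real systems use learned vectors)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list, threshold: float = 0.3) -> list:
    """Return only the chunks whose similarity to the query clears the threshold."""
    q = embed(query)
    scored = [(cosine(q, embed(c)), c) for c in chunks]
    return [c for score, c in sorted(scored, reverse=True) if score >= threshold]


# Hypothetical knowledge-file chunks
CHUNKS = [
    "Salesforce implementation for a retail client with CRM migration",
    "Cloud certification summary for the delivery team",
]

on_topic = retrieve("Salesforce implementation experience", CHUNKS)
off_topic = retrieve("legal technology projects", CHUNKS)  # nothing clears the threshold
```

The off-topic query gets an empty list back, with no error and no signal. Whatever the model writes next is based on training data alone.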

One rule: instructions are your truth layer. Knowledge files are your detail layer.

| Layer | Behavior | What goes here |
|---|---|---|
| Instructions (system prompt) | Always in context, every turn | Hard facts: project names, URLs, stats, cert counts, workflow rules |
| Knowledge files (uploads) | RAG-retrieved, matching chunks only | Long-form detail: case studies, examples, templates, style guides |

My decision rule is blunt: if getting this fact wrong would embarrass us in front of a client, it goes in the instructions. Project names, how many portfolio items we have, and which URLs are real. All in the truth layer. Always present, never gated behind retrieval.

Knowledge files carry depth. Full case studies, gold-standard examples, formatting templates. Stuff the model needs when the query matches, but stuff that won't cause damage if retrieval skips it.

What I've outlined here aligns closely with "How does advanced RAG architecture improve data retrieval and processing?". The patterns below are what I applied to fix Gemini Gem hallucination.

Seven Patterns That Fix Gemini Gem Hallucination

These came from weeks of testing in production. Not in theory. Each one fills a particular gap that I discovered while creating a Gem that uses our project portfolio to draft RFP responses.

We now apply the same ideas to everything we build, including conversational AI agents.

Pattern 1: Grounding Directive

The single most impactful fix I found.

Make line 1 of your instructions: "Always reference the attached knowledge files before answering." That's it. Without this line, Gems drift away from uploaded files in longer conversations. With it, adherence goes up significantly.

Pattern 2: Fact Registry

RAG can't return what doesn't match. So I put a structured lookup table directly in the instructions. One line per project: name, tech stack, key outcome, URL.

The registry ends with "Total: 13 projects. No others exist." It bypasses RAG completely because it's always visible to the model. Even on a zero-match query, the model can check the list and see there's nothing else to reference.
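If the portfolio lives in structured data, the registry text can be generated rather than typed by hand, which keeps the total in the closing line honest. This is a minimal sketch: the `Project` fields mirror the four columns described above, but the pipe-separated layout, the `PROJECT REGISTRY` header line, and the sample projects are illustrative assumptions, not the actual registry.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Project:
    name: str
    stack: str
    outcome: str
    url: Optional[str]  # None means the project has no public link


def render_registry(projects: list) -> str:
    """Render one line per project, then a closure line with the exact count."""
    lines = ["PROJECT REGISTRY"]
    for p in projects:
        link = p.url if p.url else "NO URLs"
        lines.append(f"- {p.name} | {p.stack} | {p.outcome} | {link}")
    # The count is derived from the data, so it cannot drift from the list
    lines.append(f"Total: {len(projects)} projects. No others exist.")
    return "\n".join(lines)


# Hypothetical placeholder projects, not real portfolio entries
PROJECTS = [
    Project("Example CRM Rollout", "Salesforce", "placeholder outcome", "https://example.com/crm"),
    Project("Example Data Platform", "BigQuery", "placeholder outcome", None),
]

registry_text = render_registry(PROJECTS)
```

Paste the resulting text into the Instructions field. Regenerate it whenever the portfolio changes so the list and the total stay in sync.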

Pattern 3: Dual-Attention Placement

I initially put anti-hallucination rules only at the top of my instructions. The model ignored them in long prompts.

Then I read about the "lost in the middle" effect: LLMs attend most strongly to the beginning and end of their context, weakest in the middle.

So I placed the same rule at both positions. Top (line 3): "There are exactly 13 projects. If you write one not in the registry, STOP." Bottom (CONSTRAINTS section): identical rule.

Google's prompt engineering guidance recommends placing critical restrictions near the end of instructions. Dual placement covers both attention peaks.
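One way to keep the two copies of the rule identical is to assemble the instructions from parts. The sketch below assumes a simple layout (grounding directive, blank line, rule, body, then a closing CONSTRAINTS section); the `build_instructions` helper and the sample body text are hypothetical.

```python
def build_instructions(critical_rule: str, body: str) -> str:
    """Place the same critical rule near the top and again in a closing CONSTRAINTS section."""
    return "\n\n".join([
        "Always reference the attached knowledge files before answering.",
        critical_rule,                    # top copy: line 3 after the blank separator
        body.strip(),
        "CONSTRAINTS\n" + critical_rule,  # bottom copy: last line of the prompt
    ])


RULE = "There are exactly 13 projects. If you write one not in the registry, STOP."
instructions = build_instructions(RULE, "ROLE\nExpert RFP response writer for IT services.")
```

Because both copies come from the same variable, editing the rule in one place can never leave a stale version at the other position.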

Pattern 4: Visible Receipt

Before generating the proposal, I make the Gem output a "MATCHED PROJECTS" list with the specific names and source files it found.

If an unfamiliar project shows up in that list, I know it was hallucinated before even reading the proposal.

For the model, the forced step of listing matches before writing creates a reasoning checkpoint that reduces drift during generation.
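The receipt can also be audited mechanically. The sketch below assumes the Gem prints a `MATCHED PROJECTS` header followed by bullet lines of the form `- Name (source: file)`. That exact layout is an assumption for illustration, so adjust the parsing to whatever format your instructions request.

```python
import re


def parse_receipt(output: str) -> list:
    """Collect project names from the bullet lines directly under MATCHED PROJECTS."""
    names = []
    in_receipt = False
    for line in output.splitlines():
        stripped = line.strip()
        if stripped.upper().startswith("MATCHED PROJECTS"):
            in_receipt = True
            continue
        if in_receipt:
            if not stripped.startswith("- "):
                break  # the first non-bullet line ends the receipt
            # Drop the trailing "(source: ...)" part if present
            names.append(re.sub(r"\s*\(source:.*\)\s*$", "", stripped[2:]).strip())
    return names


def unknown_projects(output: str, registry: set) -> list:
    """Return receipt entries that do not appear in the registry."""
    return [name for name in parse_receipt(output) if name not in registry]


# Hypothetical Gem output and registry names
SAMPLE = """MATCHED PROJECTS
- Example CRM Rollout (source: portfolio.pdf)
- Example Legal Portal (source: case-studies.pdf)

PROPOSAL
..."""

flagged = unknown_projects(SAMPLE, {"Example CRM Rollout", "Example Data Platform"})
```

Any name in `flagged` was never in the registry, so the proposal can be rejected before anyone reads it.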

Pattern 5: Verification Fence

Even when the Gem matches the right project, it sometimes embroiders. Adds a framework I didn't mention. Inflates an outcome number.

So I added a mandatory verification step: "Before writing, confirm every project name, tech stack item, outcome, and URL appears in the PROJECT REGISTRY. Remove anything that doesn't."

The fence catches the embellishment that slips past the initial matching step.

Pattern 6: URL Whitelisting

This pattern came from a specific embarrassment. The Gem invented a URL by combining a real domain with a fake path.

It looked totally legit. Led nowhere. My fix: an explicit whitelist of allowed URL domains, plus "NO URLs" markers on projects that don't have public links.

The instruction says, "Do not invent URLs for projects marked NO URLs." Killed the problem entirely.
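The same whitelist is worth enforcing outside the prompt on every draft. A domain check alone would miss the failure described above (a real domain with a fake path), so this sketch also compares each full URL against the registry's known URLs. The function name, the regular expression, and the example domains are assumptions for illustration.

```python
import re
from urllib.parse import urlparse

# Match http(s) URLs up to whitespace or a closing bracket
URL_PATTERN = re.compile(r"https?://[^\s)>\]]+")


def check_urls(text: str, allowed_domains: set, known_urls: set) -> list:
    """Return (url, reason) pairs for every URL that fails either check."""
    problems = []
    for raw in URL_PATTERN.findall(text):
        url = raw.rstrip(".,;:")  # trim trailing sentence punctuation
        host = (urlparse(url).hostname or "").lower()
        if host not in allowed_domains:
            problems.append((url, "domain not allowed"))
        elif url.rstrip("/") not in known_urls:
            problems.append((url, "path not in registry"))
    return problems


# Hypothetical draft text and whitelist
DRAFT = (
    "See https://example.com/crm and https://example.com/legal-tech, "
    "plus https://example.org/x."
)
issues = check_urls(DRAFT, {"example.com"}, {"https://example.com/crm"})
```

The second URL sits on an allowed domain but is still flagged, which is exactly the "looked totally legit, led nowhere" case.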

Pattern 7: Closure Statement and No-Match Path

Two parts here. The closure stops the model from treating the registry as a partial list.

Without it, the model assumes there might be more projects it hasn't seen and invents them. The no-match path gives the model a way out when nothing fits: "When zero projects match the job domain, omit the portfolio section. Output: EDITOR NOTE: No [domain] portfolio projects found."

I think this might be the most important pattern. Without an explicit escape hatch, the only thing the model can do on a zero-match query is fabricate.

Give it a legitimate alternative, and it uses it.

What Are the Gemini-Specific Prompt Differences?

Coming from Claude and GPT prompt engineering, a few things caught me off guard.

Constraints go at the end. Google's prompt engineering guidance recommends placing your most critical restrictions at the end of the instruction block. So my CONSTRAINTS section sits at the very bottom.

With Claude, I'd put constraints at the top. Gemini wants them last.

Verbosity defaults to low. Early versions of my Gem produced RFP responses that were too thin. Two paragraphs for a response that needed five.

I had to add explicit instructions: "Write substantive responses with specific project details, outcomes, and technical evidence."

Persona adherence runs strong. I set "expert RFP response writer for IT services," and the Gem latched onto that identity hard.

Wouldn't help with anything outside proposal writing. Refused. That's useful for a focused tool, but surprising the first time it happens.

The temperature stays at 1.0. This one matters if you move from Gems to the Gemini API. Google recommends keeping the temperature at 1.0 for Gemini 2.5+ thinking models. Lower values cause looping and degrade quality. The opposite of what works with Claude and GPT.

Gems don't expose this setting, but it becomes critical once you're on ADK or the API directly.

How Do You Test Whether Your Gem Is Hallucinating?

Don't test the happy path. That proves nothing. Test the gaps.

Cross-domain query: Ask about a domain not in your files. Does the Gem make something up, or hit the no-match path?

URL inspection: Click every URL in the output. Every single one. Are they real?

Name check: Compare every project name, person, and stat against your registry. One name you don't recognize means the model invented it.

Receipt audit: If you're using Pattern 4, read the matched items list first. Anything unexpected is a red flag.

Fastest test I know: ask about a topic you deliberately left out of your knowledge files. If the Gem answers with confidence and specific details, something's broken.
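The cross-domain test can be turned into a small pass/fail check on saved outputs. This is a rough sketch with known limits: it assumes the no-match path prints the `EDITOR NOTE:` marker from Pattern 7, and it only confirms that the Gem took that path without citing registry projects. It cannot spot invented names, which is what the receipt and name checks are for. The function name and sample outputs are hypothetical.

```python
def passes_gap_test(output: str, registry_names: set, marker: str = "EDITOR NOTE:") -> bool:
    """A cross-domain answer passes only if it takes the no-match path and cites no projects."""
    took_no_match_path = marker in output
    lowered = output.lower()
    cited = [name for name in registry_names if name.lower() in lowered]
    return took_no_match_path and not cited


# Hypothetical registry names and Gem outputs for an off-domain query
REGISTRY_NAMES = {"Example CRM Rollout", "Example Data Platform"}

good_output = "EDITOR NOTE: No legal tech portfolio projects found."
bad_output = "We delivered three legal technology platforms for major firms."

good_result = passes_gap_test(good_output, REGISTRY_NAMES)
bad_result = passes_gap_test(bad_output, REGISTRY_NAMES)
```

Run it over outputs from several deliberately off-domain prompts. A single failure means the escape hatch is not holding.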

When You Outgrow Gems: Moving to ADK on GCP

The seven patterns above work.

Our RFP Gem went from fabricating entire projects to producing grounded, verifiable responses. But we kept hitting ceilings.

Ten knowledge files maximum. No control over retrieval thresholds, and no programmatic access to Google Drive.

No way to chain steps or bring in external data sources mid-response. And every workaround meant cramming more logic into a prompt that was already 330 lines long.

So we moved the system to Google's Agent Development Kit (ADK) on GCP. The same two-layer architecture, the same anti-hallucination patterns, but implemented in code instead of prompt hacks.

The Gem was the right starting point.

It let us prototype the architecture, discover the failure modes, and develop the patterns. If you're building a factual Gem today, start with the seven patterns.

They'll take you further than you'd expect. But if you're hitting the 10-file limit, fighting retrieval accuracy, or needing programmatic control over your knowledge, ADK on GCP is where this architecture scales.

If your team is building AI tools that need to get facts right, whether on Gems or GCP, we've been through this and can help.

Further Reading

Primary Sources:

Custom Gem Ignoring Knowledge Base Files - Google Support, Jan 2026

Gem ignoring instructions - Google Support

CRITICAL BUG: Files retrieval truncated/ignored - Google Dev Forum, Dec 2025

Deep Dives:

Gemini Gems Guide 2025 - AI Fire

Gemini Gems Forgetting Documents - Workalizer

Is it confirmed that Gems use files in context vs RAG? - Reddit r/GeminiAI

Frequently Asked Questions

- Do Gemini Gems use RAG for knowledge files?

Yes. Knowledge files in Gemini Gems are chunked, turned into vectors, and searched on each query. Your system instructions load into context directly, but knowledge files only show up when the query matches well enough. That makes it a RAG system in practice.

- Why is my Gem ignoring its knowledge files?

Probably a match problem, not an ignored problem. If your query has low similarity to what's in your files, the retrieval system returns weak or zero results. The model falls back on training data. The files are there. They just aren't being pulled.

- How do Gemini Gems differ from the Gemini API File Search tool?

Different products. Gemini Gems are the consumer AI assistants you build at gemini.google.com, with up to 10 knowledge files. The Gemini API File Search tool is a developer API for custom apps. Most Google search results conflate the two, which makes debugging harder than it needs to be.

- What is the difference between instructions and knowledge files?

Instructions are always there. Every turn, every query. Knowledge files only show up when the retrieval system matches them to the current query. For anything that has to be right every time, use instructions. For supporting details, use knowledge files.
