March 2026 Deadline: Is Your Workato Recipe About to Lose 50% of Your Data?

Your recipe ran successfully. Your data is gone.

Starting March 15, 2026, Workato enforces a hard cap of 50,000 rows on custom SQL actions across its database connectors. If your recipe pulls more than that, it won't fail. It won't throw an error. It will silently return the first 50,000 rows and mark the job as Completed. No warning in the job output. No truncation flag. Your monitoring sees a successful run. Your downstream systems, whether that's Salesforce, Snowflake, or a reporting warehouse, receive a partial dataset and treat it as complete.

But here's what most teams are missing: the Workato custom SQL row limit has been active since February 8 for any recipe not already over the threshold. March 15 is when the last grandfathered recipes lose their exemption. If your data volumes crossed 50,000 rows at any point in the last five weeks, truncation may have already started, and your target systems could have gaps right now.

This guide covers how to find affected recipes, why the ones nobody remembers are the biggest risk, and the two migration paths with the task math you'll need to budget for.

What Changed, and When?

Starting March 15, 2026, Workato enforces a hard limit of 50,000 rows on the "Select rows using custom SQL" action. Any recipe returning more than that will have its results silently truncated to the first 50,000 records.

The rollout happened in three phases:

| Date | Change | Who's Affected |
|---|---|---|
| December 1, 2025 | 1 MB query size limit on "Run custom SQL" | All workspaces immediately |
| February 8, 2026 | 50,000 row limit on "Select rows using custom SQL" | New recipes + existing recipes not currently exceeding threshold |
| March 15, 2026 | Hard enforcement: 50,000 row cap | ALL existing recipes, no exceptions |

The February 8 date matters more than most people realize. If you created a new recipe after that date, the limit was already active. If an existing recipe was returning under 50,000 rows but has since grown past it, truncation kicked in without any signal. March 15 only catches recipes that have been consistently above the threshold since before February 8. Those are the last ones still grandfathered.
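The rollout rules above can be modeled as a small decision function, which makes the "silent since February 8" case easy to test against your own recipes. This is a sketch of the rules as this article describes them, not Workato's internal implementation; the function names and the `rows_before_phase_two` input (what the recipe was returning just before February 8) are hypothetical.

```python
from datetime import date

# Key dates as described in this article (not read from any Workato API).
PHASE_TWO = date(2026, 2, 8)          # row cap for new and under-threshold recipes
HARD_ENFORCEMENT = date(2026, 3, 15)  # grandfathering ends for every recipe
ROW_CAP = 50_000


def is_grandfathered(created: date, rows_before_phase_two: int) -> bool:
    """Hypothetical rule: a recipe keeps its exemption only if it existed
    before Feb 8 and was already returning more than the cap at that time."""
    return created < PHASE_TWO and rows_before_phase_two > ROW_CAP


def cap_applies(run_date: date, created: date, rows_before_phase_two: int) -> bool:
    """True if a run on run_date would be truncated at ROW_CAP rows."""
    if run_date >= HARD_ENFORCEMENT:
        return True  # no exceptions from March 15 onward
    if run_date >= PHASE_TWO:
        # New recipes and recipes that were under the cap get the limit now.
        return not is_grandfathered(created, rows_before_phase_two)
    return False  # the row cap was not enforced before Feb 8
```

For example, a recipe built in 2023 that returned 30,000 rows in January and has since grown to 80,000 gets `True` for a February 20 run: it was never grandfathered, so it has been truncating quietly. A recipe that was already at 120,000 rows returns `False` until March 15.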

The 50,000-row limit itself is reasonable. The enforcement mechanism is the problem. According to Workato's platform limits documentation, recipes exceeding 50,000 rows "will be truncated to this limit." No error, no warning, no indication in job output that rows were dropped.

This follows a pattern. In June 2025, Workato enforced a 100,000 row cap on Lookup Tables with a similar approach: hard enforcement date, grandfathering for existing data above the threshold. Platform limits are getting stricter, and the migration windows are short.

What's at Risk in Your Workspace?

The 50,000 row limit applies to the "Select rows using custom SQL" action across eight connectors: Google BigQuery, JDBC, IBM Db2, Oracle, PostgreSQL, Redshift, Snowflake, and SQL Server. MySQL has the same 50,000 row cap on its "Run custom SQL" action. Related limit: Lookup Tables have been capped at 100,000 rows since June 2025.

One detail can shorten your triage considerably: Workato's custom SQL actions have a configurable row limit field. If your recipe was never adjusted from a low default, it's probably pulling well under 50,000 rows and isn't at risk. The recipes where someone raised or removed that limit to pull larger datasets are the ones to audit.

For everything else, here's the audit:

Filter your workspace by connector. Look for recipes using any of the connectors above with a custom SQL action.

Check job history for the telltale flatline. Open recent runs and look at row counts over time. A recipe that consistently returns exactly 50,000 rows is almost certainly being truncated. That flatline is your signal.

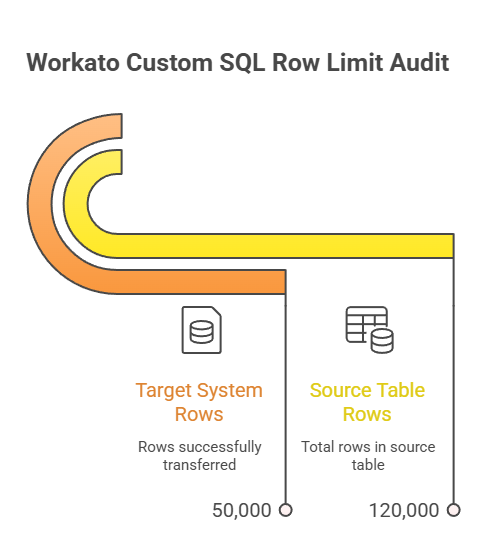

Compare source vs. target on the last full sync. Run a COUNT(*) on your source table for the query in question. Compare it to what arrived in the target system. If your source has 120,000 rows and your target has 50,000, you've been truncated.

Flag anything that ran since February 8. If a recipe crossed the threshold after that date, truncation was already active. Check your target system for gaps going back five weeks.

Prioritize by business criticality. Finance and compliance data first. Then CRM objects: Opportunities, Accounts, Cases.
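The audit checks above can be scripted once you have the numbers in hand. The sketch below assumes you have already collected, per recipe, the recent job row counts from job history, a COUNT(*) from the source and a record count from the target. The `RecipeAudit` fields, the domain labels and the priority map are hypothetical, and nothing here calls a Workato API.

```python
from dataclasses import dataclass
from datetime import date

ROW_CAP = 50_000
PHASE_TWO = date(2026, 2, 8)

# Hypothetical triage order: finance and compliance first, then CRM, then the rest.
PRIORITY = {"finance": 0, "compliance": 0, "crm": 1}


@dataclass
class RecipeAudit:
    name: str
    domain: str                # hypothetical label, e.g. "finance", "crm"
    job_row_counts: list[int]  # rows returned per recent job (job history)
    last_run: date
    source_count: int          # COUNT(*) over the same query at the source
    target_count: int          # records that actually landed in the target


def findings(r: RecipeAudit) -> list[str]:
    issues = []
    # Any run returning exactly the cap is suspicious; a run of them is the flatline.
    if ROW_CAP in r.job_row_counts:
        issues.append("job history pinned at 50,000 rows")
    # Fewer records in the target than at the source means rows went missing.
    if r.target_count < r.source_count:
        issues.append(f"target short by {r.source_count - r.target_count} rows")
    # Ran since Feb 8 with a source above the cap: truncation may already be active.
    if r.last_run >= PHASE_TWO and r.source_count > ROW_CAP:
        issues.append("ran since 2026-02-08 above the cap: check target for gaps")
    return issues


def triage(recipes: list[RecipeAudit]) -> list[tuple[str, list[str]]]:
    """Return only flagged recipes, most business-critical first."""
    flagged = [(r, findings(r)) for r in recipes]
    flagged = [(r, f) for r, f in flagged if f]
    flagged.sort(key=lambda pair: PRIORITY.get(pair[0].domain, 2))
    return [(r.name, f) for r, f in flagged]
```

A recipe with 120,000 source rows and 50,000 in the target comes back as 70,000 rows short, and sorting by domain puts a flagged finance recipe ahead of a flagged CRM one.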

The Recipes Nobody Remembers

My assessment: the greatest risk isn't the recipes your team actively manages. It's the ones nobody remembers building.

Every mature Workato workspace has them. Bulk data pulls created three to five years ago, running on a nightly schedule, never monitored beyond "did the job complete?" Back when the source table had 30,000 rows, nobody thought about limits. But the table grew. Maybe it's 80,000 rows now. Maybe 200,000. The recipe never complained because, until recently, there was no cap to hit.

On March 15, those recipes silently drop everything past row 50,000. And because nobody is actively watching them, the gap could persist for weeks before someone notices the downstream numbers are off.

If you only audit the recipes your team actively manages, you're solving half the problem.

What Are the Two Migration Paths?

Workato documents two alternatives for datasets above 50,000 rows. Both require restructuring your recipe. Neither is a settings change.

Here's the pattern that breaks on March 15: your recipe triggers, runs "Select rows using custom SQL," and processes the full result in the next step. Simple, direct, one query. After March 15, that result silently caps at 50,000 rows.

Two ways to rebuild it.

Path 1: Repeat While Loop (Offset Pagination)

Replace the single query with a Repeat While loop that pages through results in chunks. Each iteration fetches a batch using an offset, processes it, then advances to the next page.

The configuration:

Repeat While condition: Set to List size datapill > 0 (loop continues while rows remain)

Page offset: Set to Index × your page size. The Index datapill is Workato's built-in loop counter. It starts at 0 and auto-increments each iteration. For a page size of 10,000, your offsets advance: 0, 10000, 20000, 30000...

Page size / Limit: Your batch size (e.g., 10,000 rows per iteration)

Inside the loop: Your existing processing logic, now running per page

When the query returns zero rows, the condition fails and the loop exits. Workato caps Repeat While at 50,000 iterations, which at 10,000 rows per page covers up to 500 million rows. You won't hit it.

Best for ongoing syncs, real-time triggers, and recipes where each record needs individual downstream processing. Also the right choice when your downstream app supports batch APIs (Salesforce bulk upsert, NetSuite batch create).
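The loop can be modeled in plain Python to check the offset arithmetic and the exit condition before you rebuild the recipe. This is a sketch, not Workato recipe code: the hypothetical `fetch_page(offset, limit)` stands in for the custom SQL action with its offset and Limit fields, and `process` stands in for your per-page logic (ideally a single batch action).

```python
from typing import Callable

PAGE_SIZE = 10_000       # rows per iteration (the Limit field)
MAX_ITERATIONS = 50_000  # Repeat While iteration cap mentioned above


def paginate(fetch_page: Callable[[int, int], list],
             process: Callable[[list], None]) -> dict:
    """Model of the Repeat While loop: fetch a page at offset Index * PAGE_SIZE,
    process it, and stop when a fetch returns no rows."""
    index = 0  # plays the role of the Index datapill
    rows_seen = 0
    while index < MAX_ITERATIONS:
        page = fetch_page(index * PAGE_SIZE, PAGE_SIZE)
        index += 1
        if not page:  # "List size > 0" is false, so the loop exits
            break
        process(page)  # e.g. one batch upsert for the whole page
        rows_seen += len(page)
    return {"fetches": index, "rows": rows_seen}
```

Run against a 200,000-row list, the loop processes 20 pages and makes a 21st fetch that comes back empty, which is what ends it. One design point carries over to the real query: offset pagination is only reliable when the SQL has a deterministic ORDER BY (for example on a primary key). Without one, the database may return rows in a different order on each page, so rows can be skipped or repeated.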

Path 2: Export to File (CSV Export)

Replace the custom SQL action with "Export query result", a Long Action. Same SQL query, but results go to a CSV file instead of the action output. A downstream step parses the file.

The configuration:

SQL: Your existing query, unchanged

Column delimiter: Comma (configurable)

Output: Row count + column names only. The file content is NOT returned in the action output. You pick up the file in the next step.

Downstream: Add a CSV parse step, then your processing logic

This is a Long Action: Workato puts the job on hold and polls for completion, handling queries that run for minutes to hours without timeout. One thing to know: while paused, Workato suspends job sequencing even if concurrency is set to 1. If job order matters for your pipeline, plan for that.

Best for reports, archival, warehouse ETL, and large one-time data loads. Choose this when you're moving bulk data to Snowflake, BigQuery, or a reporting system.
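The export-then-parse configuration above can be sketched with Python's standard `csv` module. Both functions are hypothetical stand-ins for the "Export query result" action and the CSV parse step; the point they illustrate is that the export step hands back only metadata, and the rows themselves come from reading the file in the next step.

```python
import csv
import io


def export_query_result(rows: list[dict], out: io.TextIOBase, delimiter: str = ",") -> dict:
    """Stand-in for the export step: write every row to CSV and return
    only the row count and column names, never the rows themselves."""
    columns = list(rows[0].keys()) if rows else []
    writer = csv.DictWriter(out, fieldnames=columns, delimiter=delimiter)
    writer.writeheader()
    writer.writerows(rows)
    return {"row_count": len(rows), "column_names": columns}


def parse_csv(inp: io.TextIOBase, delimiter: str = ",") -> list[dict]:
    """Stand-in for the downstream CSV parse step."""
    return list(csv.DictReader(inp, delimiter=delimiter))
```

In use, you export into a file-like object, rewind it, and parse it: a 120,000-row export reports `row_count` 120,000 without returning any row data, and the parse step recovers all 120,000 rows.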

Side-by-Side

| | Repeat While (Pagination) | Export to File (CSV) |
|---|---|---|
| Data volume | Unlimited (paginated) | Unlimited (single export) |
| Recipe pattern | Loop with offset + process per page | Long Action export + CSV parse |
| Task cost (200K rows) | ~40 tasks (20 pages × 2 actions) | ~3 tasks (export + parse + load) |
| Execution | Synchronous per page | Async (polls for completion) |
| Job sequencing | Normal | Suspended during export |
| Best for | Ongoing sync, per-record triggers | Reports, warehouse loads, archival |
| Migration effort | Moderate: add loop + offset logic | Low-Moderate: swap action + add CSV step |

The task math matters. A single custom SQL action today costs 1 task. With Repeat While at 10,000 rows per page, 200,000 rows costs roughly 40 tasks (20 iterations × 2 actions per iteration). Export to File for the same data: roughly 3 tasks. The gap widens with data volume.

One critical detail: batch actions (like Salesforce bulk upsert) count as 1 task regardless of how many rows they process. If your loop processes records individually instead of using batch actions, task consumption scales per row, not per page. For 200,000 rows processed one at a time, that's 200,000+ tasks. Always pair your loop with batch downstream actions.
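The task arithmetic can be written out as a rough estimator for your migration budget. It encodes this article's simplified model (one fetch plus one batch action per page, about 3 tasks for Export to File), not Workato's billing rules, and it ignores the final empty fetch that ends a Repeat While loop. The function and constant names are hypothetical.

```python
import math

EXPORT_TO_FILE_TASKS = 3  # export + parse + load, per the estimate above


def repeat_while_tasks(rows: int, page_size: int, actions_per_page: int = 2) -> int:
    """Tasks when each page runs one fetch plus one batch action."""
    pages = math.ceil(rows / page_size)
    return pages * actions_per_page


def per_record_tasks(rows: int, page_size: int) -> int:
    """Tasks when the loop calls a single-record action for every row:
    one fetch per page plus one task per row."""
    pages = math.ceil(rows / page_size)
    return pages + rows
```

For 200,000 rows at 10,000 per page, `repeat_while_tasks` gives 40 and `per_record_tasks` gives 200,020, which is where the "200,000+ tasks" figure comes from.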

Factor task projections into your migration estimate. Have the conversation with your Workato account team before you migrate, not after the invoice arrives.

The LIMIT/TOP trap: Don't add a LIMIT or TOP clause to your custom SQL as a shortcut. I've seen teams try this. It interferes with Workato's pagination and can produce incomplete results. Use the built-in Limit field in the action configuration.

How Do You Know the Fix Worked?

Silent truncation created this problem. Don't let silent assumptions about your fix create the next one.

Run the migrated recipe on a known, countable dataset.

Compare source count vs. target count. Exactly. Not close. Not roughly.

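Check batch iteration count in the Workato job output. If you batched at 10,000 and have 75,000 records, you should see 8 iterations that return rows (7 full batches + 1 partial batch of 5,000). With a List size > 0 condition, expect one more iteration that returns zero rows and ends the loop. Fewer row-returning iterations than that means rows were dropped.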

For recipes running since February 8: run a historical gap analysis. Pull target system records and compare against source for the past five weeks.

That last step is the uncomfortable one. But if your recipe exceeded the threshold after February 8, gaps likely already exist. Verification needs to look backwards, not just forward.
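Both verification checks can be scripted. The sketch assumes you can pull the primary keys of the records in scope from source and target (for the historical gap analysis, records created or changed since February 8), and that you read the number of row-returning iterations off the job output. The function names are hypothetical.

```python
import math


def expected_iterations(total_rows: int, page_size: int) -> int:
    """Iterations that return rows: full pages plus one partial page, if any."""
    return math.ceil(total_rows / page_size)


def missing_ids(source_ids: set, target_ids: set) -> set:
    """Primary keys present at the source but absent from the target."""
    return source_ids - target_ids


def verify(source_ids: set, target_ids: set, iterations_seen: int, page_size: int) -> list[str]:
    """Return a list of problems; an empty list means both checks passed."""
    problems = []
    gap = missing_ids(source_ids, target_ids)
    if gap:
        problems.append(f"{len(gap)} source records missing from target")
    expected = expected_iterations(len(source_ids), page_size)
    if iterations_seen < expected:
        problems.append(f"saw {iterations_seen} iterations, expected {expected}")
    return problems
```

Comparing key sets rather than counts also tells you which records to backfill, not just how many are missing.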

The Bottom Line

March 15 is the hard enforcement date for the last grandfathered recipes. But if your data volumes crossed 50,000 rows any time after February 8, the damage may already be done.

Three actions before March 15: audit your workspace (start with the recipes nobody remembers), cross-check target system row counts against source for the past five weeks, and migrate to Repeat While or Export to File depending on your use case and task budget.

Have you found affected recipes already? Thoughts?

If your Salesforce data pipelines run through Workato, we help teams audit and migrate before the deadline. Get in touch →

Further Reading

Official Documentation:

Platform Limits - Workato Docs (complete limit reference)

SQL Server Run Custom SQL Action - Workato Docs (action configuration and limits)

MySQL Run Custom SQL Action - Workato Docs (MySQL-specific 50K row limit)

Export Query Result Action - Workato Docs (CSV export alternative)

Database Connector Best Practices - Workato Docs

Migration Implementation:

Repeat While Loop - Workato Docs (offset pagination pattern)

Long Actions - Workato Docs (async execution for bulk operations)

Batch Processing - Workato Docs (batch trigger and action patterns)

Tasks - Workato Docs (task counting rules)

Optimizing Task Usage - Workato Docs (batch actions reduce tasks by 100x)

Enforcement Precedent:

Lookup Table Row Limit FAQ - Workato Docs (June 2025 enforcement pattern)

Community:

Parsing a CSV and Hitting the 50K Row Limit - Workato Community

Frequently Asked Questions

Will my recipe fail or show an error when it exceeds 50,000 rows?

No. The recipe succeeds, returns the first 50,000 rows, and the job status reads Completed. No error, no warning, no truncation flag in the output.

Will Workato tell me which of my recipes are affected?

Workato published documentation and community posts about the change, but there's no in-product alert that flags specific recipes at risk. The audit is on you.

My recipe returns just under 50,000 rows today. Is it safe?

No. You're sitting at the threshold. Any growth in source data triggers truncation. Migrate proactively.

Does the limit apply to the "Run custom SQL" action too?

Different limit, same risk. "Run custom SQL" has a separate 1 MB query size cap effective since December 1, 2025. The 50,000 row limit applies to "Select rows using custom SQL" on most connectors. Note: MySQL applies the 50K cap on its "Run custom SQL" action directly.

How many tasks will each migration path cost?

Export to File costs roughly 3 tasks regardless of data volume. Repeat While costs ~2 tasks per page (e.g., ~40 tasks for 200K rows at 10K per page). Critical: always use batch actions inside your loop. Processing records individually scales per row, turning 200K rows into 200K+ tasks.

Let’s Talk

Drop a note below to move forward with the conversation 👇🏻